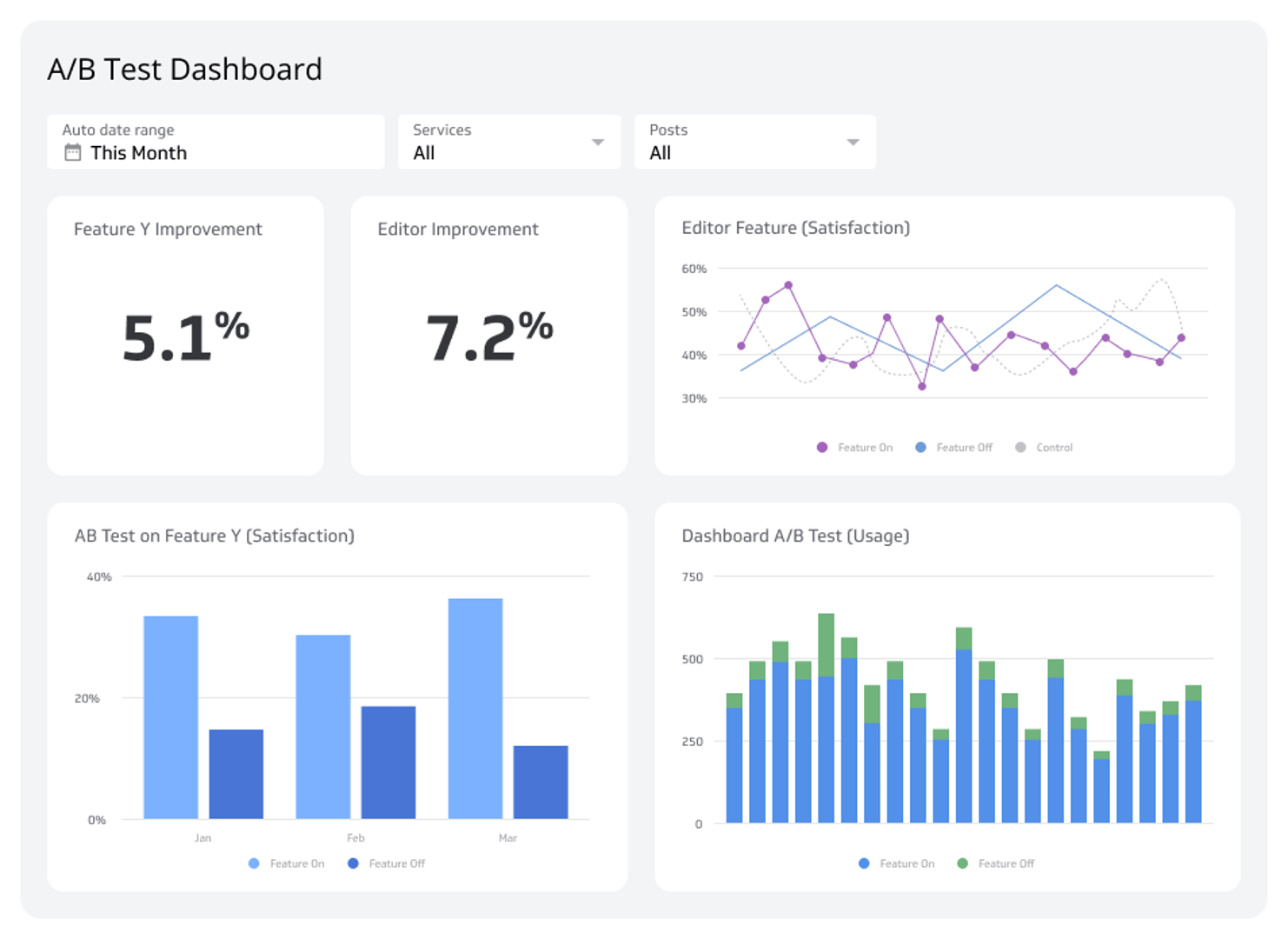

A/B Test Dashboard

Track and measure A/B test results to make data-driven decisions.

Are you confident that your latest feature release or website change is a genuine improvement? Guesswork can be costly. An A/B Test Dashboard removes the uncertainty by providing a clear, real-time view of your experiment's performance. It allows you to track key metrics side-by-side, understand user behaviour, and ultimately prove the value of your projects with hard data. Stop wondering and start knowing which changes truly drive success.

What is an A/B test dashboard?

An A/B test dashboard is a reporting tool designed to track and measure the results of controlled experiments. It presents user feedback and interaction data in a way that balances qualitative insights with quantitative scale, helping you understand not just what users do, but how they feel about the changes you're testing.

This type of dashboard typically monitors the results of A/B tests on specific product features, website elements, or marketing campaigns. It’s an essential tool for measuring whether a variant has improved the user experience or harmed it. By visualizing the performance of version A against version B, you can quickly see which one better achieves your goals.

Ultimately, an A/B test dashboard is a powerful way to communicate key experiment metrics to stakeholders and prove the impact of your work.

Why use an A/B test dashboard?

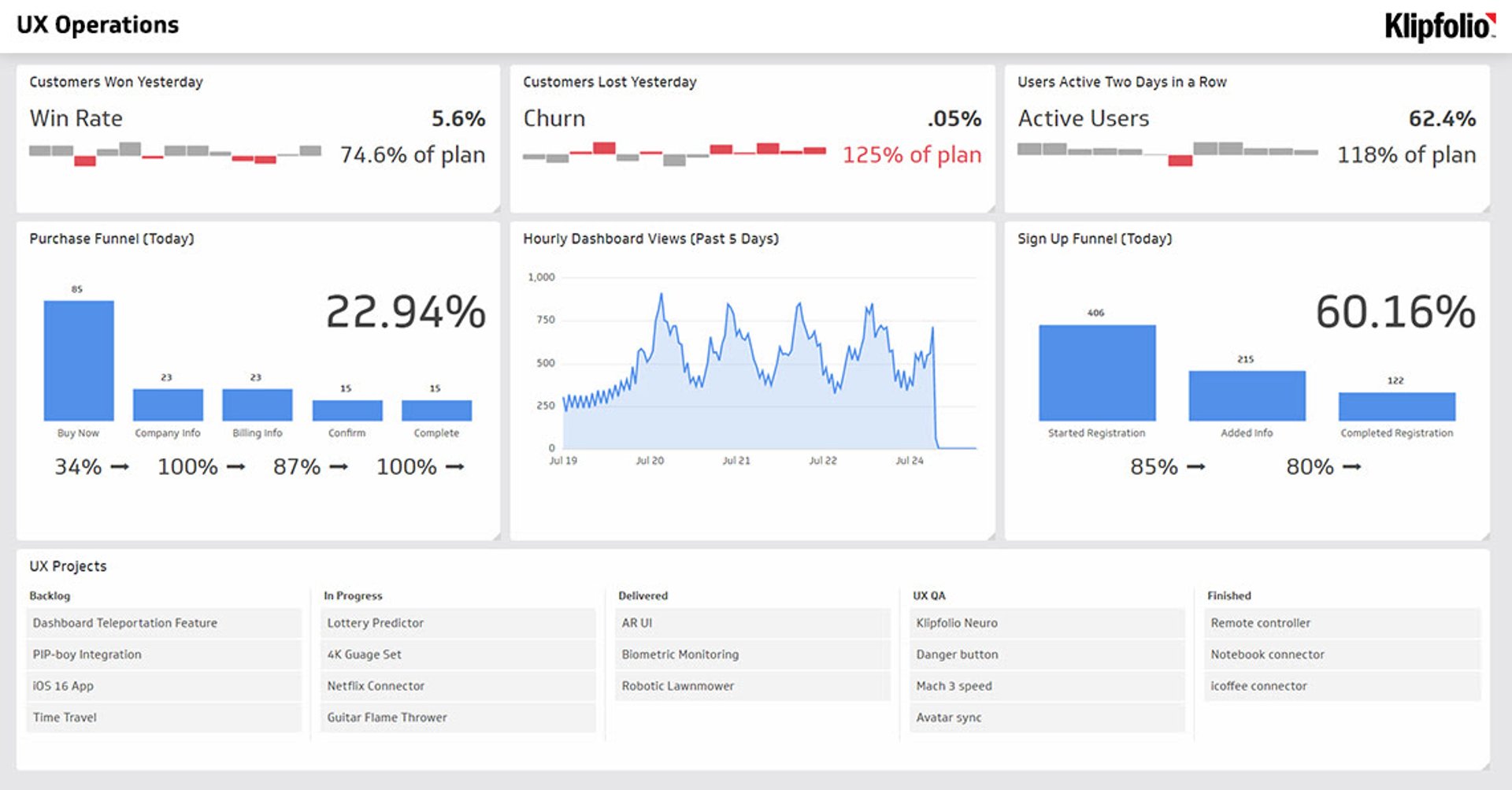

For product, UX, and marketing teams, monitoring how people engage with a product or website is critical. You need to understand the user journey from initial interaction to conversion or churn. Dashboards are the perfect way to measure the impact of changes and validate your hypotheses.

For example, you can use side-by-side visualizations to compare the satisfaction ratings for Cohort A and Cohort B. The difference can be communicated through:

- Satisfaction improvement percentages: A direct measure of the change in user happiness.

- Weighted satisfaction scores: To account for the varying levels of feedback intensity.

- Comparative bar charts: To clearly visualize the difference in satisfaction scores.
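
The first of these measures can be sketched in a few lines of Python. This is only a minimal illustration, assuming satisfaction is collected on a 1-5 scale and that "improvement" means the relative change in the average rating. The function name `improvement_pct` and the sample ratings are hypothetical and do not come from any particular dashboard tool.

```python
from statistics import mean


def improvement_pct(baseline: float, variant: float) -> float:
    """Relative change of the variant over the baseline, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (variant - baseline) / baseline * 100


# Hypothetical 1-5 satisfaction ratings for each cohort (made-up sample data)
cohort_a = [3, 4, 4, 2, 5, 3, 4]
cohort_b = [4, 5, 4, 3, 5, 4, 5]

avg_a = mean(cohort_a)
avg_b = mean(cohort_b)
change = improvement_pct(avg_a, avg_b)

print(f"Cohort A: {avg_a:.2f}  Cohort B: {avg_b:.2f}  change: {change:+.1f}%")
```

The two averages are the values a comparative bar chart would plot, and `change` is the figure a satisfaction improvement percentage would report.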

Because many teams run multiple A/B tests simultaneously, SaaS dashboards allow them to efficiently communicate progress and results both within the team and to the wider organization.

Key metrics for your A/B test dashboard

- Weighted Satisfaction Score: This metric provides a nuanced view of user satisfaction by assigning different weights to responses (e.g., "Very Satisfied" is worth more than "Satisfied").

- Positive Satisfaction Rating: A straightforward percentage of users who reported a positive experience, giving you a clear top-line indicator of success.

- A/B Test Feature Funnels: These funnels track how a change impacts critical user journeys and long-term value.

  - Edit to Save: Measures the immediate impact on a core user action.

  - Edit to 6-month LTV: Connects a feature change to its long-term financial impact.

  - Edit to Purchase: Directly ties the A/B test to conversion rates.

- Satisfaction Before and After Introduction: This set of metrics isolates the impact of your change on user sentiment and engagement.

  - Weighted Satisfaction: Compares the overall satisfaction score pre- and post-change.

  - Satisfied Responses: Tracks the volume of positive feedback.

  - Dissatisfied Responses: Monitors for any negative impact on user experience.

  - Response Rates Not Affected: Ensures that the change didn't discourage users from providing feedback altogether.
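
To make the arithmetic behind these metrics concrete, here is a rough Python sketch. Everything in it is an assumption made for illustration: the weights, the response labels, the function names, and the sample data are all hypothetical. The only constraint taken from the description above is that "Very Satisfied" counts for more than "Satisfied".

```python
# Hypothetical weights; a real dashboard may use a different scale.
WEIGHTS = {
    "Very Satisfied": 2,
    "Satisfied": 1,
    "Neutral": 0,
    "Dissatisfied": -1,
    "Very Dissatisfied": -2,
}
POSITIVE = {"Very Satisfied", "Satisfied"}


def weighted_satisfaction(responses):
    """Average weight across all survey responses."""
    if not responses:
        raise ValueError("no responses to score")
    return sum(WEIGHTS[r] for r in responses) / len(responses)


def positive_rating(responses):
    """Percentage of responses that are positive."""
    if not responses:
        raise ValueError("no responses to score")
    return sum(r in POSITIVE for r in responses) / len(responses) * 100


def funnel_conversion(entered, completed):
    """Percentage of users who entered a funnel and completed it."""
    return completed / entered * 100 if entered else 0.0


# Made-up survey responses collected before and after a change
before = ["Satisfied", "Neutral", "Dissatisfied", "Satisfied"]
after = ["Very Satisfied", "Satisfied", "Satisfied", "Neutral"]

for label, responses in (("before", before), ("after", after)):
    print(
        f"{label}: weighted={weighted_satisfaction(responses):.2f}, "
        f"positive={positive_rating(responses):.0f}%"
    )

# Made-up "Edit to Save" funnel: 200 users started editing, 150 saved
print(f"Edit to Save: {funnel_conversion(200, 150):.0f}%")
```

The same `funnel_conversion` helper could be applied to the Edit to Purchase funnel by swapping in the relevant counts. A long-horizon funnel such as Edit to 6-month LTV would need revenue data rather than a simple completion count.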

To make confident, data-backed decisions, you need a way to bring all your experiment data together. With a tool like Klipfolio Klips, you can build a custom A/B test dashboard to monitor your results in real time and share insights easily with your entire team.
