7 Data Modeling Techniques For Better Business Intelligence

Published 2023-11-27

Summary - Boost your business results with the best data modeling techniques to gain key insights into what is driving your business.

Every day, an estimated 2.5 quintillion bytes of data are created, and that volume keeps growing. With so much information at our disposal, it is increasingly important for organizations to access and analyze relevant data to predict outcomes and improve their services.

However, collecting data and arranging it into arbitrary structures and connections is not enough. To access the data reliably and get the most out of it, you need a data model.

What is Data Modeling?

Data modeling is a crucial process that helps enterprises organize and lay out their information. It involves creating visual representations of how analytics data should be structured to achieve specific business goals. The modeling process helps you understand the relationships within your data, ensure data accuracy in your schema, and improve decision-making within your teams.

In simple terms, data modeling is the process of defining how information will be structured, related, and stored in a database design. This practice enables organizations to make data-driven decisions and meet their business goals.

However, data modeling is not as easy as it seems. Before you can propose an analytics solution that aligns with your organization's goals and accomplishes its intended aims, you need a thorough understanding of its schema.

How data modeling techniques help businesses organize information

Data modeling is an essential tool for any business that needs to make sense of the vast amount of data available. By creating models, your business can lay out and interpret its data architecture in ways that are useful to it.

Data modeling is also useful for enterprises that want to streamline their processes and reduce costs. For instance, you can create a data model schema that shows how different parts of an organization work together. Managers can then use it to identify inefficiencies and develop analytics-driven solutions that improve overall productivity.

What is the Importance of Data Modeling?

Below are some of the reasons why your business needs data modeling:

A clear representation of data makes it easier to analyze the data properly. It provides a quick overview of the data, which developers can then use in various applications.

Data modeling represents the data accurately in a model. It reduces the chances of redundant or missing data, which helps with clear analysis and processing.

Data modeling improves data quality and enables the concerned stakeholders to make data-driven decisions.

Since a lot of business processes depend on successful data modeling, it is necessary to adopt the right data modeling techniques for the best results.

Data modeling serves as a compass, guiding organizations in recognizing potential risks and promising opportunities. Businesses can then develop strategies to mitigate those risks or take advantage of new data and opportunities that come their way.

Different Types of Data Modeling Methods

Data modeling techniques are essential tools that guide the structure and organization of data, making it more manageable and useful for decision-making and analysis. Let’s have a look at the commonly used modeling techniques:

- Hierarchical model

- Relational model

- Network model

- Object-oriented model

- Entity-relationship model

- Physical data model

- Logical data model

- Dimensional model

Hierarchical model

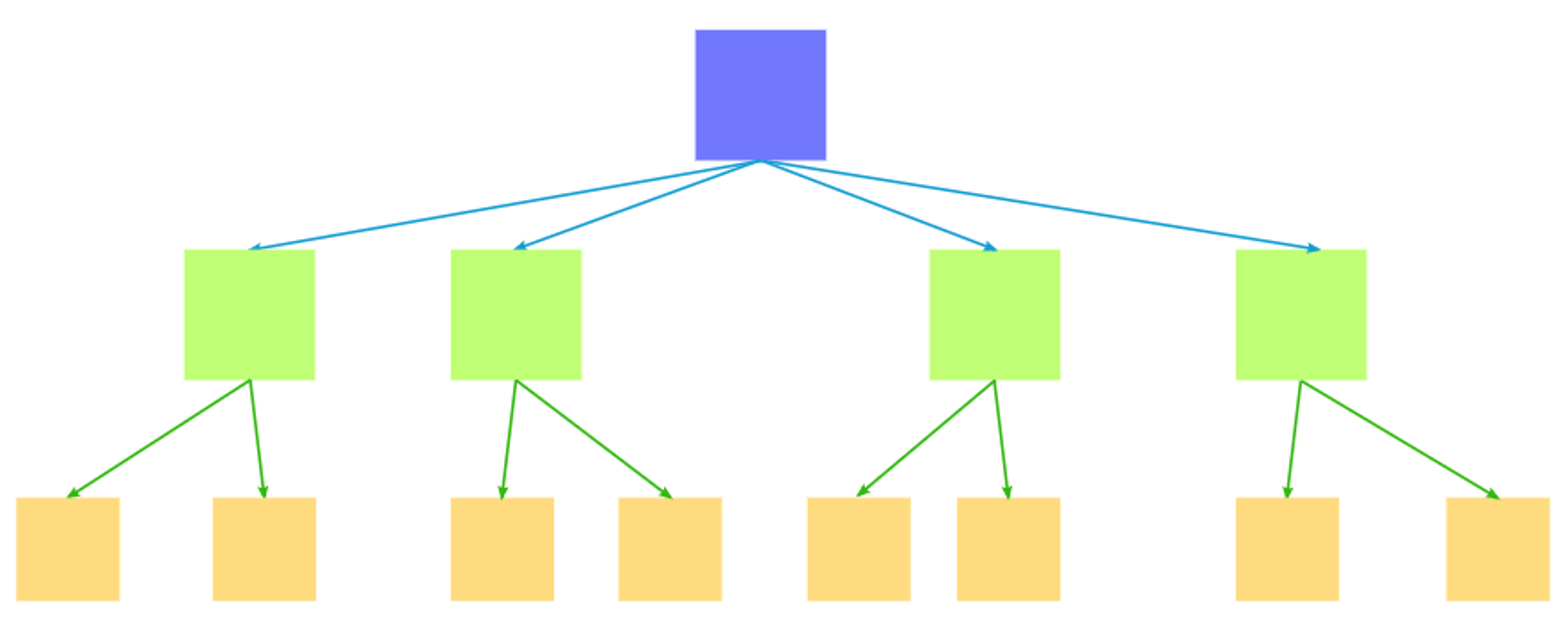

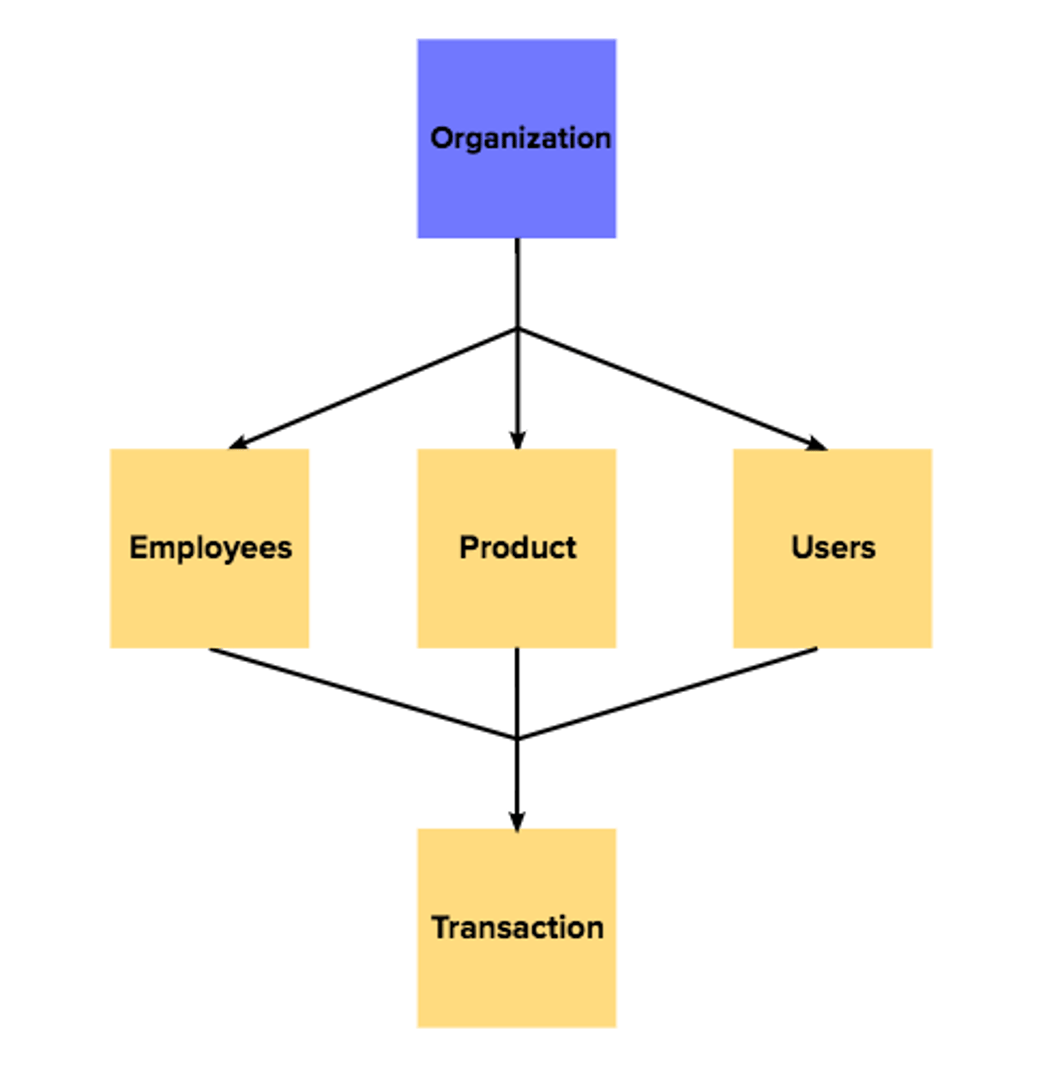

The hierarchical model is reminiscent of a family tree, where data is structured in a top-down manner. Think of this technique as a multi-level pyramid, with each level representing a parent-child relationship.

With this data modeling tactic, data is arranged hierarchically: each entity has exactly one parent (except the root at the top) and can have multiple children. This technique is apt for databases with clear parent-child relationships, such as organizational structures or file systems. However, its rigid structure can make accommodating changes or additions challenging.

For example, if an employee in a company hierarchy reports to two managers, the model struggles due to dual reporting. Or in a file system, if a file needs to exist in two folders, this model can't easily adapt and would require two copies of the file. This limitation can lead to data inconsistency if one copy gets updated and the other doesn't, which makes these types of models tricky.
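To make the single-parent limitation concrete, here is a minimal Python sketch of a hierarchical model. It assumes each node stores exactly one parent reference; the `Node` class and the folder and file names are hypothetical, chosen only for illustration.

```python
# A minimal hierarchical (tree) model: every node stores exactly one parent.
# The Node class and the folder/file names are hypothetical.

class Node:
    def __init__(self, name, parent=None, content=None):
        self.name = name
        self.parent = parent          # single parent, by design of the model
        self.children = []
        self.content = content
        if parent is not None:
            parent.children.append(self)

    def path(self):
        """Walk up the single-parent chain to the root."""
        parts = []
        node = self
        while node is not None:
            parts.append(node.name)
            node = node.parent
        return "/".join(reversed(parts))


root = Node("root")
docs = Node("docs", parent=root)
shared = Node("shared", parent=root)

# The same report must live in two folders, so the tree needs two copies.
report_a = Node("report.txt", parent=docs, content="v1")
report_b = Node("report.txt", parent=shared, content="v1")

report_a.content = "v2"                   # update one copy...
print(report_a.path(), report_a.content)  # root/docs/report.txt v2
print(report_b.path(), report_b.content)  # root/shared/report.txt v1 (stale)
```

Because `report.txt` exists as two separate nodes, nothing keeps the copies in sync, which is exactly the inconsistency described above.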

Relational model

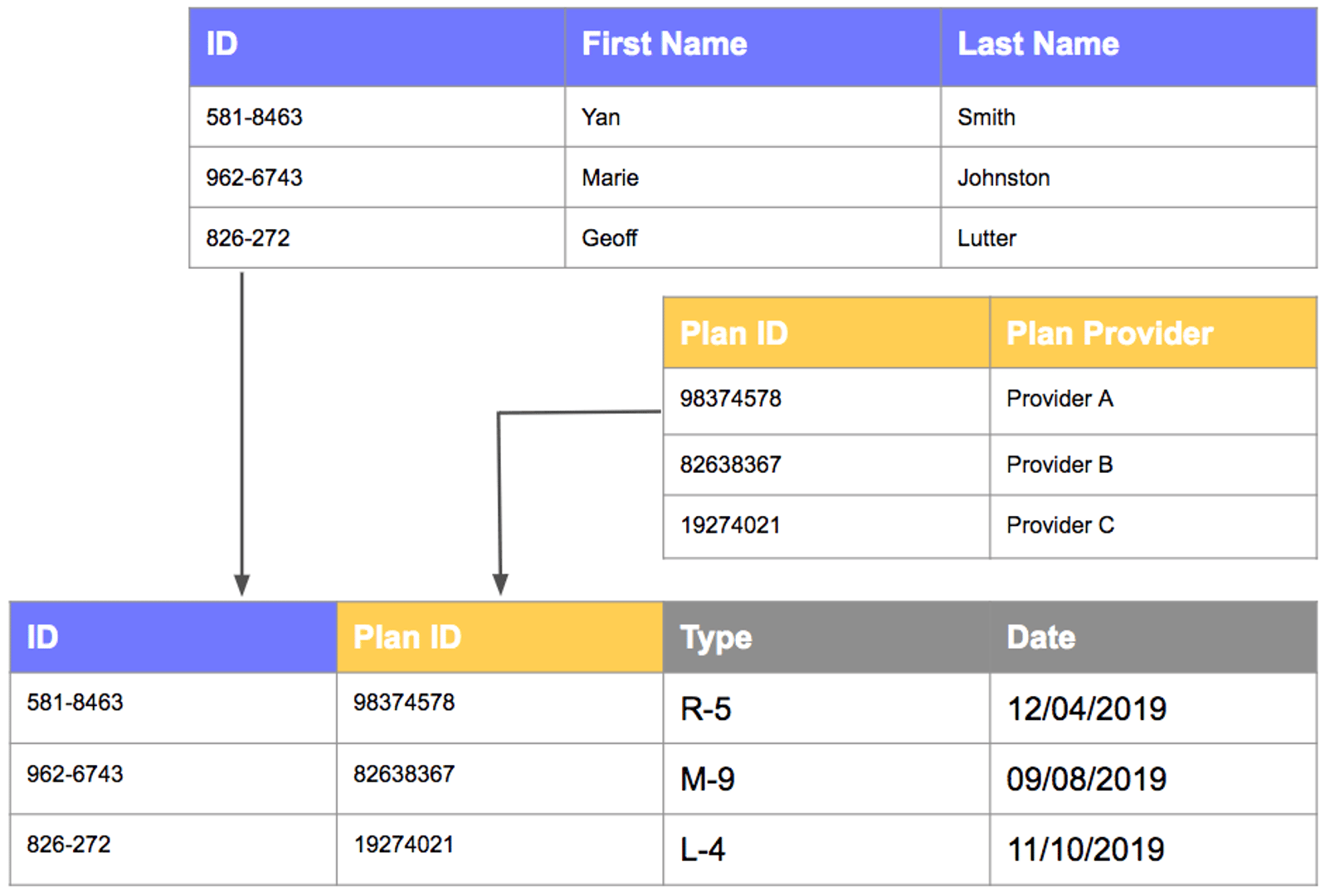

The relational model is the cornerstone of modern data modeling. Imagine a well-organized spreadsheet, with tables representing entities, rows representing individual records, and columns representing attributes. Connections between tables are established through keys: a primary key uniquely identifies each row in a table, and a foreign key in one table refers to the primary key of another. The relational modeling technique offers flexibility, enabling efficient querying and analysis. Just as puzzle pieces fit together, tables in a relational model can be combined at query time through joins, fostering robust data interactions.

Relational modeling is a go-to technique for many applications due to its adaptability and scalability. For example, it is used in e-commerce for tracking products and sales, healthcare for managing patient records, banking for transaction histories, and social media platforms for user data and posts.
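Here is a small sketch of primary and foreign keys in practice, using Python's built-in `sqlite3` module with an in-memory database. The `customers` and `orders` tables and their columns are hypothetical examples, not a prescribed schema.

```python
import sqlite3

# In-memory database; table and column names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled

conn.execute("""
    CREATE TABLE customers (
        customer_id INTEGER PRIMARY KEY,   -- primary key: one row per customer
        name        TEXT NOT NULL
    )
""")
conn.execute("""
    CREATE TABLE orders (
        order_id    INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL REFERENCES customers(customer_id),  -- foreign key
        amount      REAL NOT NULL
    )
""")

conn.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Ada"), (2, "Grace")])
conn.executemany("INSERT INTO orders VALUES (?, ?, ?)",
                 [(10, 1, 25.0), (11, 1, 40.0), (12, 2, 15.0)])

# Join the tables through the key to get total spend per customer.
rows = conn.execute("""
    SELECT c.name, SUM(o.amount) AS total
    FROM customers AS c
    JOIN orders AS o ON o.customer_id = c.customer_id
    GROUP BY c.customer_id
    ORDER BY total DESC
""").fetchall()
print(rows)  # [('Ada', 65.0), ('Grace', 15.0)]

# An order pointing at a customer that does not exist is rejected.
fk_rejected = False
try:
    conn.execute("INSERT INTO orders VALUES (13, 99, 5.0)")
except sqlite3.IntegrityError:
    fk_rejected = True
print(fk_rejected)  # True
```

SQLite only enforces foreign keys when `PRAGMA foreign_keys = ON` is set for the connection; many other relational databases enforce them without that extra step.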

Network model

The network model resembles a web, in which information is interconnected through multiple links. Unlike the hierarchical model, where each child has only one parent, entities here can have multiple parents. This method of data modeling is suitable for scenarios with intricate relationships, such as interconnected supply chains or complex project dependencies.

Much like untangling a web, navigating through the network model can get tricky with larger webs, but it excels in representing more intricate associations.
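A network model can be sketched as a graph in which a record may point to several parents. The Python sketch below assumes a simple parent-link dictionary; the supplier and part names are made up for illustration.

```python
from collections import defaultdict

# child -> set of parents; unlike a tree, a record may have several
parents = defaultdict(set)

def link(parent, child):
    parents[child].add(parent)

link("Supplier A", "Bolt")
link("Supplier B", "Bolt")     # Bolt has two parents
link("Bolt", "Engine")
link("Gasket", "Engine")

def ancestors(record):
    """Every record reachable by following parent links upward."""
    seen, stack = set(), [record]
    while stack:
        for parent in parents.get(stack.pop(), ()):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen

print(sorted(parents["Bolt"]))       # ['Supplier A', 'Supplier B']
print(sorted(ancestors("Engine")))   # ['Bolt', 'Gasket', 'Supplier A', 'Supplier B']
```

The traversal in `ancestors` also hints at why larger networks get tricky: answering a question can mean following many paths through the web.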

Object-oriented model

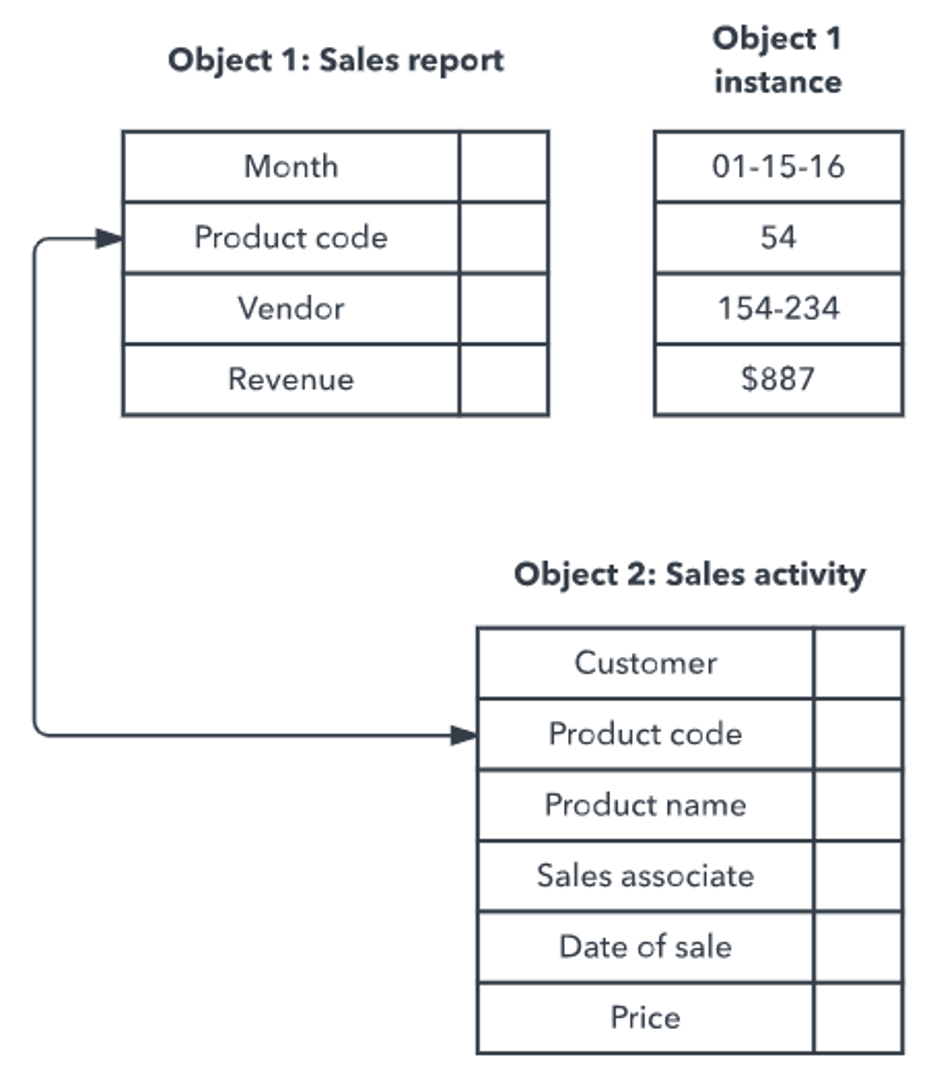

In the object-oriented model, data takes on the form of objects, much like real-world entities. Think of it as a digital representation of tangible items. This technique aligns with the way we perceive the world, making it intuitive. Objects encapsulate data and methods, allowing for self-contained units that interact with each other. In this data modeling approach, objects have specific traits and actions, much like unique puzzle pieces. It's often used in software development and complex data settings because it maps naturally onto object-oriented programming.
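As a rough illustration, here is an object-oriented sketch in Python where each object bundles its data (attributes) with the behavior that acts on it (methods). The `Product` and `ShoppingCart` classes are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Product:
    sku: str
    price: float

@dataclass
class ShoppingCart:
    # Data: the cart's line items as (product, quantity) pairs
    items: list = field(default_factory=list)

    # Behavior lives on the object alongside its data
    def add(self, product: Product, quantity: int = 1) -> None:
        if quantity < 1:
            raise ValueError("quantity must be at least 1")
        self.items.append((product, quantity))

    def total(self) -> float:
        return sum(product.price * quantity for product, quantity in self.items)


cart = ShoppingCart()
cart.add(Product("MUG-01", 8.5), quantity=2)
cart.add(Product("TEE-02", 20.0))
print(cart.total())  # 37.0
```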

Entity-relationship model

The Entity-Relationship (ER) model is a key data modeling technique. Here, entities are like real-world objects, attributes are their features, and relationships show how they connect. Think of these types of models as a data map: the entities are your main points, the attributes describe them, and their associations show links in the schema. ER diagrams visually display this.

For instance, in a university database, entities might be students, courses, and teachers. Attributes could be names and titles. Connections would then show which students take which courses.
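The university example can be sketched in Python as follows. This is one possible encoding, assuming each course has a single teacher and that enrollment is a many-to-many relationship stored as (student, course) pairs; all IDs and names are hypothetical.

```python
from dataclasses import dataclass

# Entities and their attributes (frozen so they can be stored in sets)
@dataclass(frozen=True)
class Student:
    student_id: int
    name: str

@dataclass(frozen=True)
class Teacher:
    teacher_id: int
    name: str

@dataclass(frozen=True)
class Course:
    course_id: str
    title: str
    teacher: Teacher        # "teaches" relationship: assumed one teacher per course

alice = Student(1, "Alice")
bob = Student(2, "Bob")
rivera = Teacher(100, "Dr. Rivera")
db101 = Course("DB101", "Databases", rivera)
st201 = Course("ST201", "Statistics", rivera)

# "enrolls in" relationship: many-to-many, stored as (student, course) pairs
enrollments = {(alice, db101), (alice, st201), (bob, db101)}

def courses_for(student):
    return sorted(course.title for s, course in enrollments if s == student)

def students_in(course):
    return sorted(s.name for s, c in enrollments if c == course)

print(courses_for(alice))   # ['Databases', 'Statistics']
print(students_in(db101))   # ['Alice', 'Bob']
```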

Physical data model

A physical data model is like a blueprint for how data is stored and organized in a real-world database. Imagine you're building a house: the physical data model is the detailed plan that shows where everything goes, like the walls, windows, and doors. In the world of databases, this means defining things like tables, columns, data types, and how all the pieces connect.

The physical data model is the final stage, where you get down to the nitty-gritty details. You'll optimize the database's performance, storage, and maintenance, making sure it's efficient and fast. Database administrators usually design these models, making sure they work well with the specific database software being used.

Logical data model

A logical data model is like a roadmap for understanding the relationships between different pieces of data in a database. Picture a puzzle where you have to connect the right pieces to create a complete image. The logical data model helps you figure out which pieces fit together and how they relate to each other.

In the logical data model, you'll define entities (like people, objects, or events) and their attributes (like name, age, or price), as well as the relationships between these entities. You won't worry about the specific details of how the data is stored or the technology being used; that's the job of the physical data model, which comes later.

Dimensional model

The dimensional modeling technique shines when dealing with large volumes of data for analysis. Optimized for data warehouses and analytical processes, the dimensional model enables efficient querying and information reporting.

Imagine your data as a multidimensional cube, where facts represent measurable data (e.g., sales revenue) and dimensions provide contextual information (e.g., time, location, product). Combining facts and dimensions in the schema creates a comprehensive view that helps you answer complex business questions.

In retail, for instance, you could analyze sales performance by measuring revenue against dimensions like time periods, store locations, and product categories. Another use case is website traffic analysis, where metrics like page views and time on site measure user engagement. In each case, the facts are the measurements, and the dimensions are the angles from which you slice them.
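To show how facts and dimensions fit together, here is a minimal star schema (a central fact table surrounded by dimension tables) built with Python's `sqlite3` module. The table names, columns, and sample figures are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Dimension tables: descriptive context
    CREATE TABLE dim_store   (store_id INTEGER PRIMARY KEY, city TEXT);
    CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, category TEXT);
    CREATE TABLE dim_date    (date_id INTEGER PRIMARY KEY, year INTEGER, quarter INTEGER);

    -- Fact table: one row per measurable event, with keys pointing to each dimension
    CREATE TABLE fact_sales (
        store_id   INTEGER REFERENCES dim_store(store_id),
        product_id INTEGER REFERENCES dim_product(product_id),
        date_id    INTEGER REFERENCES dim_date(date_id),
        revenue    REAL
    );

    INSERT INTO dim_store   VALUES (1, 'Paris'), (2, 'Tokyo');
    INSERT INTO dim_product VALUES (1, 'Books'), (2, 'Games');
    INSERT INTO dim_date    VALUES (1, 2023, 1), (2, 2023, 2);
    INSERT INTO fact_sales  VALUES
        (1, 1, 1, 100.0), (1, 2, 2, 250.0),
        (2, 1, 1, 80.0),  (2, 2, 1, 300.0), (2, 2, 2, 120.0);
""")

# Revenue (the fact) sliced by store location and product category (dimensions)
by_city_category = conn.execute("""
    SELECT s.city, p.category, SUM(f.revenue)
    FROM fact_sales AS f
    JOIN dim_store   AS s ON s.store_id   = f.store_id
    JOIN dim_product AS p ON p.product_id = f.product_id
    GROUP BY s.city, p.category
    ORDER BY s.city, p.category
""").fetchall()

# The same fact sliced along the time dimension instead
by_quarter = conn.execute("""
    SELECT d.quarter, SUM(f.revenue)
    FROM fact_sales AS f
    JOIN dim_date AS d ON d.date_id = f.date_id
    GROUP BY d.quarter
    ORDER BY d.quarter
""").fetchall()

print(by_city_category)
print(by_quarter)  # [(1, 480.0), (2, 370.0)]
```

Keeping the measurements in one narrow fact table means new questions usually require only a different set of joins and groupings, not a new table.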

Data modeling can be achieved in various ways, but the basic concept remains the same.

How to Create a Data Model

Data modeling is a systematic journey that transforms real-world complexity into structured clarity. The process moves through several well-defined stages, each contributing to an effective and meaningful data model.

Step #1: Gather your data

This stage is like laying the foundation of a skyscraper. Just as architects evaluate a project's requirements and aspirations before laying the first brick, data modeling hinges on comprehending the core business needs of an organization.

By collaborating closely with stakeholders, identify the fundamental analytics elements that need representation. These include the key entities (or the distinct objects in the business domain like customers, products, and orders) that make up the operations of your enterprise, the attributes that define them, and the connections that tie them together. Gather all the crucial building blocks you need for a precise portrayal of your data.

Step #2: Conceptualize your designs

With the requirements firmly in place, it's time to sketch the blueprint. Conceptual data modeling serves as the foundational layer in the data modeling process. It simplifies real-world entities and their associations, enabling your stakeholders to grasp the overall design without getting bogged down in the technical specifics.

At the conceptual design level, users create a high-level model that captures the essence of the data landscape. Think of it as an artist's preliminary sketch! This model lays the groundwork, depicting essential entities and their relationships without delving into intricate details. The schema provides a visual guide that aligns stakeholders' understanding of the data structure and sets the tone for the subsequent stages.

Examples

At the conceptual level, the emphasis is on the big picture. Major elements and their interactions take center stage. The entities are identified and their connections outlined, providing a model of how the various components of the business relate to each other.

For instance, a conceptual data model for a retail business might identify the relationships between customers, products, and orders. In a hospital management system, the main entities would be patients, doctors, and appointments, and the relationships would center on patients having appointments with doctors.

For a social media platform, the main data entities would be users, posts, and comments. The connections to examine would be instances where users create posts, comment on them, and so on.

Step #3: Create a logical model

A logical data model shows how data is related. It defines data items, such as names or prices, and the connections between them. Rather than focusing on the technology used, it focuses on the data's layout. This independence from technology makes the model flexible enough to be applied across different database platforms, and it results in well-defined elements for accurate reporting and analytics.

Examples

In the e-commerce context, for instance, a Product entity might have the attributes ProductID, ProductName, and Price, and be related to a Category entity. Meanwhile, a Patient entity in a hospital system might have PatientID, FirstName, LastName, and Date of Birth (DOB), linked to the patient's history.
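Because a logical model is technology-independent, it can be written down as plain structured data rather than database code. The Python sketch below is one hypothetical way to record entities, their attributes with generic types, and a relationship; the `Entity` and `Relationship` classes are illustrative, not a standard format.

```python
from dataclasses import dataclass, field

# Hypothetical, technology-neutral description of a logical model.
@dataclass
class Entity:
    name: str
    key: str                                        # identifying attribute
    attributes: dict = field(default_factory=dict)  # attribute name -> generic type

@dataclass
class Relationship:
    source: str
    target: str
    cardinality: str                                # e.g. "many-to-one"

category = Entity("Category", key="CategoryID",
                  attributes={"CategoryID": "identifier", "CategoryName": "text"})
product = Entity("Product", key="ProductID",
                 attributes={"ProductID": "identifier",
                             "ProductName": "text",
                             "Price": "decimal"})
product_in_category = Relationship("Product", "Category", "many-to-one")

def describe(entity):
    """Render an entity as Name(attribute: type, ...)."""
    attrs = ", ".join(f"{name}: {kind}" for name, kind in entity.attributes.items())
    return f"{entity.name}({attrs})"

def dangling(relationships, entities):
    """Relationships that refer to an entity the model does not define."""
    known = {e.name for e in entities}
    return [r for r in relationships if r.source not in known or r.target not in known]

print(describe(product))  # Product(ProductID: identifier, ProductName: text, Price: decimal)
print(dangling([product_in_category], [product, category]))  # []
```

Nothing here says how the data is stored; types like "decimal" stay generic until the physical stage maps them onto a specific database.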

Step #4: Create a physical model

Just as construction brings a blueprint to life, the physical design phase implements the logical data model in a tangible database system.

Moving from logic to implementation, physical modeling bridges the gap between concepts and the tangible. It involves translating the refined logical model into a specific database structure that fits the requirements of your chosen database system. Decisions are made about how data will be stored, indexed, and accessed. This phase aligns the model with the technical capabilities of the chosen database platform, ensuring that data retrieval is efficient and meets the organization's needs.

Examples

For an e-commerce schema, this could mean creating a "Product" table in the database, complete with columns like ProductID (primary key), ProductName, Price, and a CategoryID foreign key. In the same way, a hospital management database might require a "Patient" table with columns such as PatientID, FirstName, LastName, DOB, and a column for medical history. In social media, a "Post" table could include columns like PostID, Content, Timestamp, and a UserID foreign key.
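As a sketch of the e-commerce example, here is what the physical definitions might look like in SQLite via Python's `sqlite3` module. The exact column types, the `CHECK` constraint, and the index name are assumptions; physical details like these depend on your chosen database system.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")   # SQLite needs this to enforce foreign keys
conn.executescript("""
    CREATE TABLE Category (
        CategoryID   INTEGER PRIMARY KEY,
        CategoryName TEXT NOT NULL UNIQUE
    );
    CREATE TABLE Product (
        ProductID   INTEGER PRIMARY KEY,
        ProductName TEXT NOT NULL,
        -- SQLite accepts DECIMAL(10, 2) but stores it with NUMERIC affinity;
        -- many other systems treat it as an exact fixed-point type.
        Price       DECIMAL(10, 2) NOT NULL CHECK (Price >= 0),
        CategoryID  INTEGER NOT NULL REFERENCES Category(CategoryID)
    );
    -- A physical-level choice: index the foreign key to speed up lookups by category.
    CREATE INDEX idx_product_category ON Product(CategoryID);
""")

conn.execute("INSERT INTO Category VALUES (1, 'Kitchen')")
conn.execute("INSERT INTO Product VALUES (1, 'Coffee Mug', 8.50, 1)")

# The CHECK constraint rejects a negative price at the storage layer.
price_rejected = False
try:
    conn.execute("INSERT INTO Product VALUES (2, 'Broken Mug', -1.00, 1)")
except sqlite3.IntegrityError:
    price_rejected = True

index_names = [row[1] for row in conn.execute("PRAGMA index_list('Product')")]
print(price_rejected, index_names)
```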

Best Data Modeling Practices

In the realm of data modeling, navigating this level of complexity requires a compass of best practices to guide the way. These principles ensure that your data models not only capture information accurately but also remain adaptable to the dynamic needs of your business.

Organize your data

You can find answers to most business questions by organizing your data in terms of four elements—facts, dimensions, filters, and order.

Let’s understand this better with an example: assume that you run four e-commerce stores in four different locations around the world. It is the end of the year, and you want to know which store made the most sales. In this scenario, the facts will be the sales data, the dimension will be store location, the filter will be the last 12 months, and the order will rank the stores by total sales in decreasing order.
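The store example maps directly onto code. This Python sketch uses made-up sales records and a hypothetical reporting date; each step is labeled with the element (fact, dimension, filter, order) it corresponds to. The 12-month window is approximated as 365 days.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical sales records: (sale_date, store_location, amount)
sales = [
    (date(2023, 3, 14), "London", 1200.0),
    (date(2023, 7, 2), "Sydney", 900.0),
    (date(2023, 9, 20), "London", 300.0),
    (date(2023, 10, 5), "Toronto", 2000.0),
    (date(2022, 6, 1), "Sydney", 5000.0),   # outside the 12-month window
    (date(2023, 11, 1), "Mumbai", 750.0),
]

as_of = date(2023, 12, 31)                  # hypothetical reporting date
cutoff = as_of - timedelta(days=365)        # filter: roughly the last 12 months

totals = defaultdict(float)
for sale_date, store, amount in sales:
    if sale_date > cutoff:                  # filter
        totals[store] += amount             # fact (amount) grouped by dimension (store)

# order: stores ranked by total sales, highest first
ranking = sorted(totals.items(), key=lambda item: item[1], reverse=True)
print(ranking)
# [('Toronto', 2000.0), ('London', 1500.0), ('Sydney', 900.0), ('Mumbai', 750.0)]
```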

Organize your data properly using individual tables for facts and dimensions to enable quick analysis.

Make your data models simple and clear

Just as clear road signs make navigation straightforward, keeping your model simple and comprehensible is important. Opt for a minimalist approach and focus on the essentials. Extraneous elements clutter the map and can obscure the path to meaningful insights in the schema.

Design your data model to scale

Data models become outdated quicker than you expect. As such, your database designs should be scalable, ensuring they can accommodate new entities, information, traits, and relationships without major upheavals. By building with flexibility in mind, you future-proof your models, making them robust companions in an ever-changing business landscape.

The best practice here is to store your data models in an easy-to-manage repository so that you can adjust them easily as requirements change.

Ensure the accuracy of your data

A data model without accurate information is like a painting without clarity. To maintain the integrity of your data models, make sure that each entity, attribute, and relationship is accurately represented. Regularly validate and update your models to reflect the latest data realities, aligning them closely with the actual data sources.

Always crosscheck before continuing

Data modeling is a big project, especially when you are dealing with huge amounts of data. Thus, you need to be cautious. Keep checking your data model before continuing to the next step.

For example, if you need to choose a primary key to identify each record in the dataset, make sure that you are picking the right attribute. Product ID could be one such attribute: even if two records have the same values in other columns, such as the same count, their product IDs still distinguish them. Keep checking that you are on the right track. Are the product IDs the same too? In that case, product ID cannot serve as the primary key, and you will need to look for another attribute, or a combination of attributes, that identifies each record uniquely.
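A quick way to crosscheck a candidate primary key is to confirm that its values are present and unique in every record. The helper below is a hypothetical sketch, and the inventory rows are made up; it also shows how a combination of columns can work when a single column does not.

```python
def is_candidate_key(records, columns):
    """True if the given columns are present and unique across all records."""
    seen = set()
    for record in records:
        key = tuple(record.get(col) for col in columns)
        if None in key or key in seen:      # missing value or duplicate key
            return False
        seen.add(key)
    return True

# Hypothetical inventory rows
inventory = [
    {"product_id": "P-100", "warehouse": "North", "count": 40},
    {"product_id": "P-200", "warehouse": "North", "count": 40},
    {"product_id": "P-100", "warehouse": "South", "count": 12},
]

print(is_candidate_key(inventory, ["count"]))                    # False
print(is_candidate_key(inventory, ["product_id"]))               # False
print(is_candidate_key(inventory, ["product_id", "warehouse"]))  # True
```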

Only keep as much data as is needed

While you might be tempted to keep all the data you have, do not fall into this trap! Although storage is relatively cheap today, keeping everything can take a toll on performance. More often than not, a smaller, well-chosen subset of data is enough to answer most business questions.

Decide clearly how many datasets you actually need to keep. Paying to host enormous amounts of unneeded data raises costs and, sooner or later, leads to performance issues.

Challenges and Considerations When Handling Data

Challenges are inevitable in the intricate landscape of data modeling. These hurdles demand careful attention and strategic maneuvering to ensure that your data models remain effective, relevant, and reliable.

Data quality

Reliable input data is crucial for effective data modeling. Poor-quality data seeps through the entire data modeling process, leading to inaccuracies and misleading insights. By instituting rigorous data validation and cleansing processes, you fortify the foundation and ensure that the models built upon it provide trustworthy information.
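Validation and cleansing rules vary by business, but a minimal sketch might look like this. The field names and rules (required ID, no duplicates, a plausible email, a non-negative amount) are assumptions for illustration.

```python
def clean_orders(rows):
    """Return (valid, rejected); rejected pairs each raw row with a reason."""
    valid, rejected = [], []
    seen_ids = set()
    for row in rows:
        order_id = row.get("order_id")
        email = (row.get("email") or "").strip().lower()  # cleanse: trim and lowercase
        amount = row.get("amount")
        if order_id is None:
            rejected.append((row, "missing order_id"))
        elif order_id in seen_ids:
            rejected.append((row, "duplicate order_id"))
        elif "@" not in email:                              # deliberately loose check
            rejected.append((row, "invalid email"))
        elif not isinstance(amount, (int, float)) or amount < 0:
            rejected.append((row, "invalid amount"))
        else:
            seen_ids.add(order_id)
            valid.append({"order_id": order_id, "email": email, "amount": float(amount)})
    return valid, rejected


raw = [
    {"order_id": 1, "email": "  Ada@Example.com ", "amount": 30},
    {"order_id": 1, "email": "ada@example.com", "amount": 30},
    {"order_id": 2, "email": "not-an-email", "amount": 10},
    {"order_id": 3, "email": "grace@example.com", "amount": -4},
    {"email": "anon@example.com", "amount": 5},
]
valid, rejected = clean_orders(raw)
print(valid)  # [{'order_id': 1, 'email': 'ada@example.com', 'amount': 30.0}]
print([reason for _, reason in rejected])
# ['duplicate order_id', 'invalid email', 'invalid amount', 'missing order_id']
```

Keeping rejected rows alongside a reason, rather than silently dropping them, makes it easier to trace data-quality problems back to their source.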

Changing data attributes

Adaptability is paramount when talking about data modeling. Business requirements evolve over time, and your data models must keep pace. Data models should be flexible enough to accommodate new entities, traits, and connections. Embrace agility; allow your models to transform alongside the shifting business landscape.

Data performance vs. normalization

Data modelers must balance the benefits of data organization (normalization) with the need for efficient data retrieval (performance). Over-normalization spreads data across many tables, so queries need more joins, which can slow down retrieval. Striking the right balance between normalized structures and optimized performance keeps your data models nimble and responsive, allowing for timely analysis.
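The trade-off can be seen in a small `sqlite3` sketch. Both versions below return the same answer; the denormalized table avoids a join at query time but repeats the category name on every product row. Table names and data are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Normalized: category names stored once, referenced by key
    CREATE TABLE category (category_id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE product  (product_id INTEGER PRIMARY KEY, category_id INTEGER, price REAL);
    INSERT INTO category VALUES (1, 'Books'), (2, 'Games');
    INSERT INTO product  VALUES (1, 1, 10.0), (2, 1, 15.0), (3, 2, 60.0);

    -- Denormalized: category name copied onto every product row
    CREATE TABLE product_flat (product_id INTEGER PRIMARY KEY, category_name TEXT, price REAL);
    INSERT INTO product_flat
        SELECT p.product_id, c.name, p.price
        FROM product AS p JOIN category AS c USING (category_id);
""")

# Normalized query: needs a join
normalized = conn.execute("""
    SELECT c.name, AVG(p.price) FROM product AS p
    JOIN category AS c USING (category_id)
    GROUP BY c.name ORDER BY c.name
""").fetchall()

# Denormalized query: reads a single table
denormalized = conn.execute("""
    SELECT category_name, AVG(price) FROM product_flat
    GROUP BY category_name ORDER BY category_name
""").fetchall()

print(normalized == denormalized)  # True
print(denormalized)                # [('Books', 12.5), ('Games', 60.0)]
```

The flip side is maintenance: renaming a category takes one update in the normalized design, but every matching row in `product_flat` would need to change.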

Scalability and data resource constraints

Things may be simple at first, but they can become complex in no time. This is why it is a good idea to start with small, simple data models.

Once you are sure of your initial models in terms of accuracy, gradually introduce more datasets. This helps you in two ways. First, you are able to spot any inconsistencies in the initial stages. Second, you can eliminate them on the go.

Cross-functional alignment

Align your data definitions so that all teams are on the same page. Think of a large company as a bustling city: data modeling acts as the bridges connecting its different neighborhoods, the various departments.

For example, the marketing team's "lead" might be the sales team's "potential customer." By aligning data representation, when marketing talks about leads generated from a campaign, sales immediately knows these are potential customers they need to follow up with. This ensures every department speaks the same language, preventing misunderstandings and streamlining operations.

Cross-functional alignment ensures that everyone interprets data consistently, fostering effective communication and decision-making.

Transform Your Data Into Insights

Data modeling techniques are crucial for structuring information in a way that supports effective decision-making and gets more out of your analytics. To reach your business intelligence goals, model your data correctly and use appropriate tools to keep the system simple.

Knowing the types of data modeling techniques, following best practices, and understanding the challenges involved are crucial to your organization’s success. Everyone in a business should learn data management and analytics to drive future growth.

As data models lay the groundwork for understanding relationships, modeling tools like PowerMetrics build upon this foundation, helping stakeholders uncover valuable data insights that drive business growth. Our software empowers you to seamlessly integrate your data models into actionable dashboards and reports. Magnify your impact, turn intricate connections into clear narratives, and transform raw data into valuable insights.